Multimodal Search SEO: How to Stay on the Feed When AI Sees Everything

Search once was straightforward. You typed some text, Google ranked the results, and you clicked. But in 2026, that idea already feels outdated. Searching is no longer something you “do”; it is something you “show.”

People now talk to their phones, take pictures of products, record their problems, or point their cameras at things they can’t explain.

Search engines and AI assistants now see, hear, and interpret the world essentially as a human does—and this is changing the landscape of multimodal search SEO for every brand trying to remain discoverable.

In this article, we outline the new optimization framework: modular content, multimodal metadata, answer-first design, and KPIs that matter beyond just clicks. By the end, you will know exactly how to show up in a world where search looks more like conversation than keywords.

A new front door: results that answer, not just link

Multimodal engines (think Google’s AI Mode and Google Lens, as well as other advanced visual and voice engines) do not simply send a user to a webpage; they provide answers within the search experience itself.

They work through conversational dialogue rather than lists of web pages. To run a multimodal search, a user uploads a photo, types a short text message, or speaks a question aloud; the search engine responds with a combination of text, images, video snippets, links to products, and sometimes an embedded shopping experience.

These “one-stop” solutions reduce the number of clicks a user must make and change how and where brands attract attention.

The emergence of true multimodal search has changed how consumers discover brands from the ground up. Because engines now use AI to build contextual relationships between text, images, audio, and video, every asset your brand produces becomes a potential search signal.

Why that is important for SEO: visibility is now multidimensional

Traditional SEO signals (such as page authority, backlinks, and semantic headings) remain relevant — but they are not sufficient. In a multimodal world, visibility depends on how your content performs across modalities:

- Are your images recognizable and understandable within a visual model?

- Do your videos have clean, searchable transcripts and scene metadata?

- Are your product images shoppable and tagged with structured data?

- Does your existing copy provide an AI with adequate context to mash together video, voice, and text into a useful answer?

If one modality is missing or poorly optimized, you’re not at the multimodal table. Search engines are using “fan-out” techniques—turning one input (an image or mixed query) into a number of internal queries to find the best multimodal answer.

This means each asset you publish is a possible entry point. Optimize your assets, and you expand your reach.
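One way to act on the checklist above is a quick inventory audit. The sketch below is a minimal illustration, assuming a hypothetical `Asset` record with fields such as `alt_text`, `transcript`, and `has_structured_data`. None of these names come from a real CMS or search API, so map them to whatever your own system exposes.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    """Hypothetical record for one published asset (field names are assumptions)."""
    url: str
    kind: str                      # "image", "video", or "text"
    alt_text: str = ""
    caption: str = ""
    transcript: str = ""
    has_structured_data: bool = False


def audit_asset(asset: Asset) -> list[str]:
    """Return the multimodal gaps found for a single asset."""
    gaps = []
    if asset.kind == "image":
        if not asset.alt_text.strip():
            gaps.append("missing alt text")
        if not asset.caption.strip():
            gaps.append("missing caption")
    if asset.kind == "video" and not asset.transcript.strip():
        gaps.append("missing transcript")
    if asset.kind in ("image", "video") and not asset.has_structured_data:
        gaps.append("missing structured data")
    return gaps


def audit_inventory(assets: list[Asset]) -> dict[str, list[str]]:
    """Map each asset URL to its gaps, skipping assets that have none."""
    report = {}
    for asset in assets:
        gaps = audit_asset(asset)
        if gaps:
            report[asset.url] = gaps
    return report


# Example inventory with made-up URLs.
inventory = [
    Asset("https://example.com/img/sofa.jpg", "image",
          alt_text="Grey three-seat sofa"),
    Asset("https://example.com/video/setup.mp4", "video",
          transcript="Step one...", has_structured_data=True),
]
report = audit_inventory(inventory)
for url, gaps in report.items():
    print(url, "->", ", ".join(gaps))
```

The checks are deliberately simple presence tests. They flag what is missing, not whether the alt text or transcript is actually good, which still needs a human review.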

Practical wins: what to do to kick off multimodal search SEO

Begin with high-impact, low-effort adjustments that will help your content become multimodal-search-friendly today—so search engines can see, hear, and understand your assets better!

“Search is no longer just about climbing Google’s blue links—it’s about staying visible in an ecosystem where AI platforms are answering user queries directly.”

We at AdPulse have maintained that multimodal search rewards the businesses that produce the most comprehensible content, not those that produce the most material.

Here’s what that looks like in the real world in 2026:

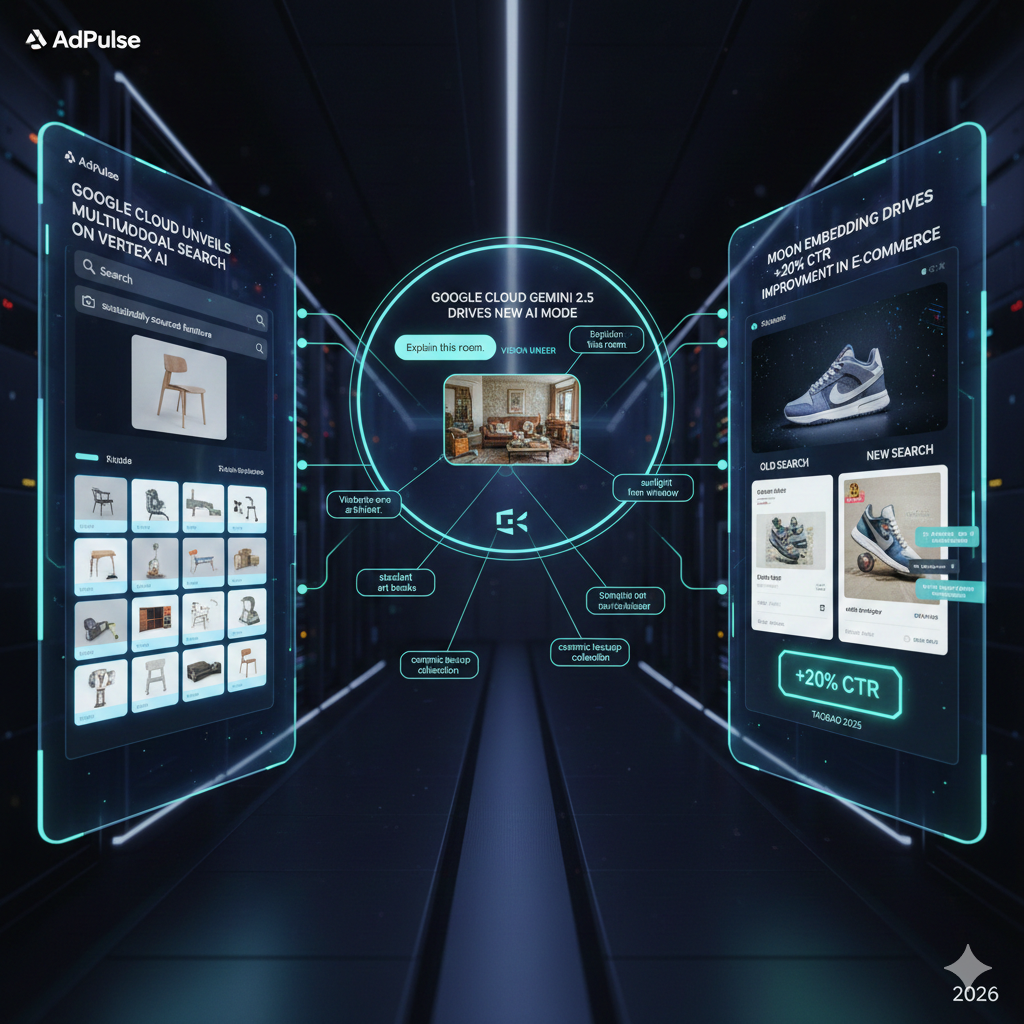

Google Cloud Unveils Multimodal Search on Vertex AI: In January 2025, Google Cloud launched an offering on Vertex AI that allows you to create search engines that leverage text + image queries — generating richer and more intuitive visual results.

Google Cloud Gemini 2.5 Drives New AI Mode With Vision Under: With Google’s Gemini 2.5 model, AI Mode can “see” images and respond in a dialogue format, breaking the scene down using a fan-out method to produce nuanced, contextual responses.

MOON Embedding Drives +20% CTR Improvement in E-Commerce: Taobao deployed a MOON multimodal embedding system into their search ads in 2025 that drove a +20% click-through rate improvement via a joint model of visual features and text.

What we focus on next:

- Make use of high-quality photos: Choose images that carry object-level metadata (such as product IDs, colors, and materials), plus captions and alt text that provide context. For video, timestamps, keyframes, and strong thumbnails help AI highlight the appropriate parts of your footage.

- Auto-generate and human-edit transcripts: Make a clear transcript with speaker labels and succinct summaries for each video and podcast. This converts spoken content into searchable formats that are multimodal and text-first.

- Utilize structured data aggressively: Provide product schema, video schema, and ImageObject markup, along with custom attributes for visuals. Structured data is what allows models to match your assets to relevant multimodal queries.

- Create modular content: Build small, reusable content units, like short text blurbs, 10-20 second video clips, and images of single objects. Modular content is more likely to appear in mixed-format answers and shopping cards.
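The structured-data advice above can be sketched as JSON-LD generated from a product record. This is a minimal illustration, not a complete markup recipe: the types and properties (`Product`, `ImageObject`, `VideoObject`, `Clip`, `Offer`) come from the public schema.org vocabulary, while the product values, the URLs, the `?t=` deep-link format, and the `build_product_jsonld` helper are made up for the example.

```python
import json


def build_product_jsonld(product: dict) -> dict:
    """Build a schema.org Product with image and video markup (illustrative helper)."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "sku": product["sku"],
        "color": product["color"],
        "material": product["material"],
        "image": {
            "@type": "ImageObject",
            "contentUrl": product["image_url"],
            "caption": product["image_caption"],
        },
        "subjectOf": {
            "@type": "VideoObject",
            "name": product["video_title"],
            "description": product["video_summary"],
            "contentUrl": product["video_url"],
            "thumbnailUrl": product["thumbnail_url"],
            "uploadDate": product["video_date"],
            "transcript": product["transcript"],
            # Clips mark the key moments; offsets are in seconds.
            "hasPart": [
                {
                    "@type": "Clip",
                    "name": clip["name"],
                    "startOffset": clip["start"],
                    "endOffset": clip["end"],
                    "url": f'{product["video_url"]}?t={clip["start"]}',
                }
                for clip in product["clips"]
            ],
        },
        "offers": {
            "@type": "Offer",
            "price": product["price"],
            "priceCurrency": product["currency"],
            "availability": "https://schema.org/InStock",
        },
    }


# Made-up product record for illustration only.
example = {
    "name": "Linen Blackout Curtain",
    "sku": "CUR-1042",
    "color": "Sand",
    "material": "Linen",
    "image_url": "https://example.com/img/curtain-sand.jpg",
    "image_caption": "Sand linen blackout curtain hung in a living room",
    "video_title": "How to hang the Linen Blackout Curtain",
    "video_summary": "A short walkthrough of measuring and hanging the curtain.",
    "video_url": "https://example.com/video/curtain-hang.mp4",
    "thumbnail_url": "https://example.com/img/curtain-thumb.jpg",
    "video_date": "2026-01-15",
    "transcript": "Host: Start by measuring the window width...",
    "clips": [
        {"name": "Measure the window", "start": 0, "end": 12},
        {"name": "Hang the rod", "start": 12, "end": 20},
    ],
    "price": "49.00",
    "currency": "USD",
}

markup = build_product_jsonld(example)
print(json.dumps(markup, indent=2))
```

The printed JSON would typically be embedded in a `<script type="application/ld+json">` tag on the product page. Which properties a given engine actually reads varies, so check the markup against each platform's own structured-data guidelines before relying on it.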

Content strategy becomes conversational and situational

Multimodal queries are often situation-rich: “Here’s a photo of my living room; find me curtains that would work, and where would I get them?” or “I recorded this meeting—find me the key decisions for next steps.” That pushes content strategy away from a one-off keyword page and toward situational assets—content that helps users complete a concrete task.

Think of instructional short videos in a step-by-step format, before-and-after galleries, how-to carousels, and compact comparison tables of products that an AI could easily take and present as one piece of valuable information. Search engines reward usefulness, and when you make it easy for them to extract and repurpose your content, you will create visibility.

The measurement dilemma: clicks are no longer the sole KPI

Traffic metrics (organic sessions) will look different when answers appear within the search experience. Anticipate an increase in “zero-click” situations, in which consumers get what they need without visiting your page. You still own the brand moment, so this is not intrinsically negative; you just need to track some additional metrics:

- Answer lift: how often your assets contributed to an AI answer.

- Asset engagement: clicks or actions taken downstream after a multimodal result (store visits, add-to-cart events, signups).

- Attribution across modalities: connecting a visual impression to a conversion that happens later.
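These metrics have no standard definition yet, so the sketch below fixes one working definition of each purely for illustration: answer lift as the share of tracked AI answers that cite at least one of your assets, and asset engagement as the average number of downstream actions per cited answer. The `AnswerLog` record and its fields are hypothetical; no analytics platform is assumed to expose data in this shape.

```python
from dataclasses import dataclass


@dataclass
class AnswerLog:
    """Hypothetical log entry for one AI answer observed for a tracked query."""
    query: str
    cited_our_asset: bool
    downstream_actions: int = 0   # e.g. add-to-cart, signup, store visit


def answer_lift(logs: list[AnswerLog]) -> float:
    """Share of tracked answers that cited at least one of our assets."""
    if not logs:
        return 0.0
    return sum(log.cited_our_asset for log in logs) / len(logs)


def asset_engagement(logs: list[AnswerLog]) -> float:
    """Average downstream actions per answer that cited our assets."""
    cited = [log for log in logs if log.cited_our_asset]
    if not cited:
        return 0.0
    return sum(log.downstream_actions for log in cited) / len(cited)


# Made-up observations for four tracked queries.
logs = [
    AnswerLog("curtains for grey sofa", True, downstream_actions=2),
    AnswerLog("blackout curtain sizes", False),
    AnswerLog("linen curtain care", True, downstream_actions=0),
    AnswerLog("curtain rod height", False),
]
print(f"answer lift: {answer_lift(logs):.0%}")
print(f"asset engagement: {asset_engagement(logs):.1f} actions per cited answer")
```

Cross-modal attribution is left out on purpose: it depends on how impressions and conversions are joined in your analytics stack, which a short sketch cannot assume.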

Some tools and platforms are beginning to report these signals, and GEO (generative engine optimization) dashboards are starting to emerge, but a firm that still optimizes only for clicks will miss where the real value lies.

To summarize: SEO in 2026 belongs to the brands machines can trust and understand

Multimodal search has transformed SEO into an ecosystem game, where credibility, provenance, and machine-readable assets outweigh keyword density. Brands that commit resources to clear attribution, structured metadata, credible visuals, and quality multimedia will become the first sources AI agents turn to when assembling their answers. Visibility will go to content that is trustworthy and easy for machines to process.

To win, teams need talent that can think beyond the text: people who can tag images, create short-form video, engineer schema, modularize content, and ensure assets are secured with licensing metadata and canonicalization. Now is the time to apply the fundamentals and secure your brand’s right to claim its ‘real estate’ when multimodal moments happen in 2026. Engines are not just reading anymore; they are watching, listening, and reasoning. Be sure your brand speaks every language engines understand.

Cut to the chase

In 2026, the brands that succeed at SEO are not necessarily the ones people can easily understand, but the ones machines can trust. Make content clear, well-structured, and ready for the multimodal future, or risk being buried by AI-generated answers. Start optimizing today and own the multimodal search moment before your competitors do.